Quick Answer

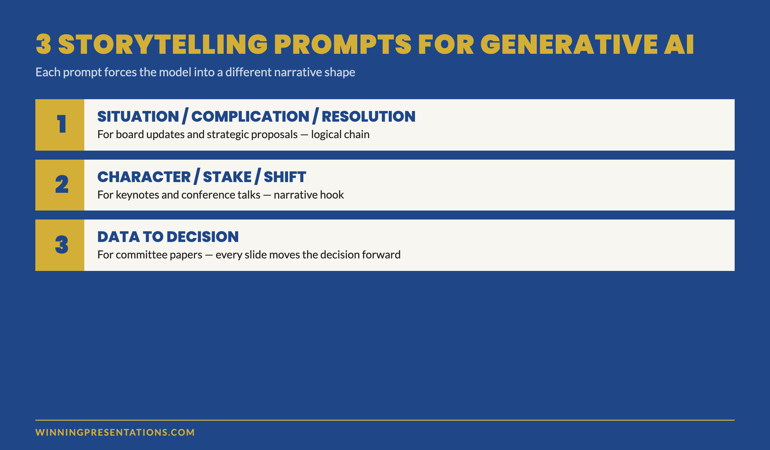

Generative AI presentation storytelling works when the prompt forces the model into a narrative structure rather than a summary. The three prompts that consistently produce usable drafts are: the situation-complication-resolution prompt, the character-stake-shift prompt, and the data-to-decision prompt. Each forces the model to choose a narrative shape before it generates copy. Without that, AI produces summaries — and senior audiences disengage from summaries.

Hadiya had been a strategy lead in a global consulting firm for eleven years. Her team produced quarterly client decks for FTSE finance directors. In April she ran an experiment: she gave ChatGPT a 22-page client report and asked it to “write a presentation that tells the story of the data.” The model produced 14 slides. Polished bullets, neat headers, clean structure. Her partner read the draft and said, “This reads like a research summary. It doesn’t tell me anything I would remember after the meeting.”

Hadiya rewrote the deck by hand. The next month she tried again — different prompt. This time the draft was usable in 40 minutes. The difference was not the model. The difference was the structure she forced into the prompt before the model wrote a word.

If your AI-drafted decks read like summaries rather than stories

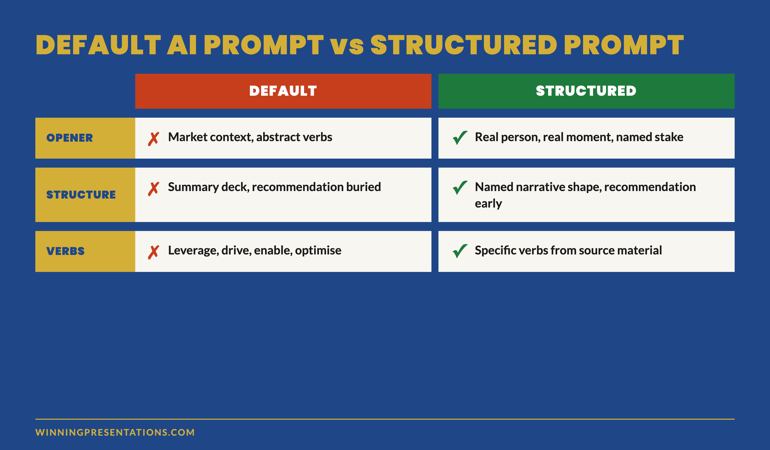

The model is not refusing to tell stories. It is defaulting to the structure most natural to a language model — paragraph-and-bullet summary — because the prompt did not ask for anything else.

Why generative AI defaults to summary, not story

Large language models are trained on one core task: predicting the most likely next token given everything before it. When asked to “write a presentation,” the most likely structure across the training data is the summary deck: title, agenda, sections, bullets, conclusion. That structure dominates corporate output, so the model produces it by default.

A senior audience does not need the summary. They have read the pre-read; they have skimmed the report. What they need is the through-line — the question the data answers, the tension the analysis exposes, the decision that follows. None of that emerges from a prompt that says “write a presentation.”

The fix is not better writing on the model’s part. The fix is a prompt that names the narrative structure before the model generates a single word. Three prompts cover most senior-audience situations. Each one forces a different narrative shape into the output.

Prompt 1 — Situation, complication, resolution

Use this prompt when the audience needs to follow a logical chain from “where we were” to “where we are now” to “what we propose.” It is the structure underneath most McKinsey-style executive briefings, and it works because senior audiences are trained to listen for it.

The prompt skeleton:

PROMPT — Situation / Complication / Resolution

You are drafting a 12-slide executive presentation. Use the situation-complication-resolution structure. Slides 1–4: the situation (where the business was, supported by 3 specific data points from the source material). Slides 5–8: the complication (the new pressure or shift that disrupts the situation, supported by 2 data points and 1 named risk). Slides 9–12: the resolution (the recommendation, the expected outcome stated as a process commitment, the trip-wires, and the decision being asked of the audience). For each slide, write a 6-word headline and 3 supporting bullets of no more than 14 words each. Do not use abstract verbs (leverage, drive, enable). Use specific verbs from the source material.

The prompt does three things the default does not. It names the structure (situation-complication-resolution). It enforces evidence (specific data points from the source material). It bans the verbs that produce generic AI copy (leverage, drive, enable). The output reads as a deliberate piece of work, not a model’s average guess at what a presentation looks like.

The constraint that matters most is the verb ban. “Leverage” and “drive” are model-default verbs — they show up because they are common across the training data. Senior audiences register them as filler. A prompt that bans them forces the model to pull verbs from the source material instead. Those verbs are specific, sometimes technical, and almost always more credible.
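
The constraints in this prompt are mechanical enough to check before anyone reads the draft. What follows is a minimal sketch, assuming you have already split the model's output into a headline and a list of bullets per slide. The function name `check_slide` and the inflection matching are illustrative assumptions, not part of the prompt itself.

```python
import re

# The three verbs the prompt bans.
BANNED_VERBS = {"leverage", "drive", "enable"}


def check_slide(headline: str, bullets: list[str]) -> list[str]:
    """Return the prompt constraints that one drafted slide breaks."""
    problems = []
    headline_words = len(headline.split())
    if headline_words != 6:
        problems.append(f"headline has {headline_words} words, expected 6")
    if len(bullets) != 3:
        problems.append(f"{len(bullets)} bullets, expected 3")
    for i, bullet in enumerate(bullets, start=1):
        word_count = len(bullet.split())
        if word_count > 14:
            problems.append(f"bullet {i} has {word_count} words, limit is 14")
    # Match the bare verb plus simple inflections (drives, driving, leveraged).
    # This is a rough heuristic: it will also flag "drive" used as a noun.
    text = " ".join([headline, *bullets]).lower()
    for verb in sorted(BANNED_VERBS):
        stem = verb[:-1]  # all three verbs end in "e"
        if re.search(rf"\b{stem}(e|es|ed|ing)?\b", text):
            problems.append(f"banned verb: {verb}")
    return problems
```

An empty list means the slide meets the stated limits. It says nothing about whether the slide is any good; that judgement stays with the presenter.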

When this prompt is the right choice

Use it for board updates, strategic proposals, and any presentation where the audience expects a logical progression from problem to recommendation. It is less effective for sales pitches, opening keynotes, or any setting where the audience needs an emotional hook before they engage with logic. For those, prompt 2 is stronger.

Prompt 2 — Character, stake, shift

The second prompt forces the model into a narrative shape: a person with something at stake, a moment when the situation changes, the decision that follows. It produces drafts that read like business stories rather than business summaries — useful for keynotes, all-hands briefings, conference talks, and any setting where the audience needs to feel the weight of the decision before they evaluate it.

PROMPT — Character / Stake / Shift

You are drafting a 10-slide presentation that opens with a real person facing a specific decision. Slide 1: name the person, their role, the moment, what was at stake. Slides 2–4: the situation as they understood it. Slide 5: the shift — the new information or moment that changed the calculation. Slides 6–8: how they responded, supported by evidence from the source material. Slide 9: what changed as a result. Slide 10: the decision the audience needs to make now. Use first or third person, not second person. No abstract verbs. No outcome guarantees — describe what the person did, not what was guaranteed to happen.

The “no outcome guarantees” line is critical. Generative AI defaults to outcome-promise language (“this approach delivered transformational results”) because that pattern is over-represented in marketing copy in the training data. Senior audiences are alert to outcome promises and discount the surrounding argument when they hear one. The prompt forces the model into process-commitment language instead.

The character requirement also blocks the model’s most common failure mode: opening with abstract market context. “In today’s rapidly evolving business environment” is the model’s default opener; it dies in the first 30 seconds in front of a senior audience. A real person at a real moment is the opposite.
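
If you adapt this prompt to a different slide count, it helps to treat the slide allocation as data and check that every slide has exactly one job. The sketch below is one way to do that. The `Beat` class and both function names are hypothetical, and the role descriptions are shortened from the prompt above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Beat:
    first: int  # first slide number of the beat
    last: int   # last slide number of the beat (inclusive)
    role: str   # what the beat must do in the story


# Slide allocation taken from the character / stake / shift prompt.
CHARACTER_STAKE_SHIFT = [
    Beat(1, 1, "name the person, their role, the moment, what was at stake"),
    Beat(2, 4, "the situation as they understood it"),
    Beat(5, 5, "the shift that changed the calculation"),
    Beat(6, 8, "how they responded, supported by evidence"),
    Beat(9, 9, "what changed as a result"),
    Beat(10, 10, "the decision the audience needs to make now"),
]


def plan_gaps(beats: list[Beat], slide_count: int) -> list[int]:
    """Return slide numbers covered by no beat or by more than one beat."""
    covered = [0] * (slide_count + 1)
    for beat in beats:
        for n in range(beat.first, beat.last + 1):
            if 1 <= n <= slide_count:
                covered[n] += 1
    return [n for n in range(1, slide_count + 1) if covered[n] != 1]


def render_plan(beats: list[Beat]) -> str:
    """Render the beats as the slide-by-slide part of a prompt."""
    parts = []
    for beat in beats:
        if beat.first == beat.last:
            label = f"Slide {beat.first}"
        else:
            label = f"Slides {beat.first}-{beat.last}"
        parts.append(f"{label}: {beat.role}.")
    return " ".join(parts)
```

Calling `plan_gaps(CHARACTER_STAKE_SHIFT, 10)` returns an empty list, which confirms the ten slides are each assigned once. Stretch the deck to 12 slides without adding beats and the function reports slides 11 and 12 as unassigned.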

When this prompt is the right choice

Use it for any presentation that opens with the audience cold — keynote, conference talk, sales pitch, internal kick-off — where the first 90 seconds need to earn the right to the rest. It is also the right prompt for change communications, where the human dimension is what carries the message past intellectual agreement into emotional acceptance.

It is less suited to credit committee papers and quarterly board updates, where the audience already has the context and just wants the logic. For those, use prompt 1.

Prompt 3 — Data to decision

The third prompt is for the situation senior professionals encounter most often: 30 pages of data that need to become a 12-slide deck that drives a single decision. Default AI prompts produce a “data summary deck” with a recommendation slide near the end. This prompt produces a “decision deck” with the data working as evidence, not as content.

PROMPT — Data to Decision

You are drafting a 12-slide decision deck. The audience must make a single decision at the end of the meeting. Slide 1: state the decision being asked of the audience in one sentence. Slide 2: the recommendation. Slides 3–6: the four most relevant data points that support the recommendation, one per slide. Each data slide must include the headline number, the source, the time period, and a one-sentence interpretation. Slides 7–9: the two or three counter-arguments and the response to each. Slide 10: the trip-wires that would force a re-vote. Slide 11: the resolution being put. Slide 12: the next decision point on the agenda. Do not include market context. Do not include backstory. Do not summarise — every slide must move the decision forward.

The instruction “do not include market context” sounds aggressive. It is necessary because market-context slides are the model’s most common form of padding. Senior audiences in a decision meeting do not need market context; they have it. A deck that opens with market context tells the audience the presenter does not know what they need.

The four-data-points constraint is also load-bearing. Without a numeric constraint, AI will produce 8–12 data points and trust the audience to pick the relevant ones. Senior audiences read that as analytical laziness. Four data points, with the analysis already done in the slide selection, read as senior judgement.
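
The data-slide rules in the prompt (four slides, each with a headline number, a source, a time period, and a one-sentence interpretation) can be checked the same way. This is a rough sketch, assuming the draft has been transcribed into simple records. `DataSlide` and `check_data_slides` are hypothetical names, and the one-sentence test is deliberately crude.

```python
import re
from dataclasses import dataclass, fields


@dataclass
class DataSlide:
    headline_number: str
    source: str
    time_period: str
    interpretation: str


def check_data_slides(slides: list[DataSlide], required: int = 4) -> list[str]:
    """Return the data-slide rules from the prompt that the draft breaks."""
    problems = []
    if len(slides) != required:
        problems.append(f"{len(slides)} data slides, expected {required}")
    for i, slide in enumerate(slides, start=1):
        # Every field the prompt asks for must be filled in.
        for field in fields(slide):
            if not getattr(slide, field.name).strip():
                problems.append(f"data slide {i}: missing {field.name}")
        # Crude sentence count: end marks followed by whitespace or end of text,
        # so a decimal point inside a number is not counted.
        enders = re.findall(r"[.!?](?:\s|$)", slide.interpretation.strip())
        if len(enders) > 1:
            problems.append(f"data slide {i}: interpretation is more than one sentence")
    return problems
```

The check only confirms the fields exist. Whether the four data points are the right four is the senior judgement the prompt is designed to make visible.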

For senior leaders running this prompt for the first time, the result is often disorienting — the deck looks shorter than expected, with no agenda slide, no executive summary, no closing thank-you. That is the point. It is a working document, not a conference talk. The room sees the work in the discipline of what was excluded.

The editorial pass: making AI output sound like you

Even with a strong prompt, AI output reads as AI output without an editorial pass. The model produces text that is grammatically perfect, lexically broad, and tonally even — and that combination is exactly the signature senior audiences register as machine-drafted. A short editorial pass changes the read.

Four moves that take 15 minutes and remove most of the AI signature:

Replace three abstract verbs with specific ones from the source material. Search the draft for “leverage,” “drive,” “enable,” “optimise,” “transform” — replace each with the verb the source document uses. The shift from generic to specific lifts the credibility of the surrounding sentence.

Cut the opening adjective on every bullet. AI defaults to “robust framework,” “comprehensive analysis,” “strategic approach.” Senior audiences treat opening adjectives as filler. Cut them. The bullet reads sharper.

Add one specific number that did not come from the source material. A specific time or duration (“17 minutes into the meeting”), a specific date (“between October and December”), a specific small number (“three of the seven options”) — one of these per page anchors the reader and signals the writer was actually present in the analysis.

Rewrite the recommendation in your own voice. The recommendation slide is the one the audience remembers. AI’s default recommendation language sounds borrowed from a McKinsey report. Yours should not. Read the AI draft, close the file, write the recommendation from scratch. Compare. Use whichever sounds like you.

The editorial pass takes 15 minutes on a 12-slide deck. It is the difference between an AI-drafted deck and an AI-drafted deck the audience does not register as AI-drafted. For senior leaders integrating AI into their workflow, this pass is the discipline that separates time saved from credibility lost.
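
The second move, cutting the opening adjective, is the easiest to partly automate. The sketch below uses only the three adjectives named above as its list. `OPENING_ADJECTIVES` and `cut_opening_adjective` are illustrative names, and you would extend the list from your own drafts.

```python
# Filler adjectives from the examples above; extend this set as needed.
OPENING_ADJECTIVES = {"robust", "comprehensive", "strategic"}


def cut_opening_adjective(bullet: str) -> str:
    """Drop the first word of a bullet if it is a known filler adjective."""
    text = bullet.strip()
    first, _, rest = text.partition(" ")
    if first.lower().strip(",") in OPENING_ADJECTIVES and rest:
        # Re-capitalise the word that now opens the bullet.
        return rest[0].upper() + rest[1:]
    return text


def cut_all(bullets: list[str]) -> list[str]:
    """Apply the cut to every bullet on a slide."""
    return [cut_opening_adjective(b) for b in bullets]
```

Treat the output as a suggestion to review, not a finished edit. A word like "strategic" is occasionally doing real work, and only the presenter can tell.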

Frequently asked questions

Which model produces the best storytelling drafts — ChatGPT, Copilot, or Claude?

For these three prompts, the difference between the major models is smaller than the difference between a structured prompt and an unstructured one. ChatGPT-5 and Claude Sonnet 4.6 produce slightly more usable drafts on the character-stake-shift prompt because both are stronger at narrative voice. Copilot is stronger on the data-to-decision prompt because it can pull from your own files. None of them produce decision-grade copy without the editorial pass.

How much source material should I paste into the prompt?

For the situation-complication-resolution and data-to-decision prompts, paste the full source. Most modern models handle 50+ page documents in a single prompt. For the character-stake-shift prompt, paste only the section about the character and the moment, plus the surrounding context. Pasting more dilutes the focus and produces a draft that wanders. The quality of the source material going in determines the quality of the structure coming out.

Can I run all three prompts on the same source and pick the best draft?

You can, and senior leaders increasingly do. The three drafts read very differently and the comparison clarifies which structure suits the audience. Run all three, compare openers and recommendations, then pick one and apply the editorial pass. Total time: about 60 minutes for a 12-slide deck — substantially less than writing from scratch, and the structural variety is itself a useful reasoning tool.
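
If you run the comparison often, the loop is simple to script. This is a sketch only: `generate` stands in for whatever function sends text to your model and returns the draft, and no specific vendor API is assumed. The `SOURCE MATERIAL:` separator is likewise an arbitrary choice.

```python
from typing import Callable


def draft_all(
    prompts: dict[str, str],
    source: str,
    generate: Callable[[str], str],
) -> dict[str, str]:
    """Run every prompt against the same source and return drafts by prompt name."""
    drafts = {}
    for name, prompt in prompts.items():
        drafts[name] = generate(f"{prompt}\n\nSOURCE MATERIAL:\n{source}")
    return drafts


def openers(drafts: dict[str, str]) -> dict[str, str]:
    """Return the first non-empty line of each draft for side-by-side comparison."""
    result = {}
    for name, draft in drafts.items():
        lines = [line.strip() for line in draft.splitlines() if line.strip()]
        result[name] = lines[0] if lines else ""
    return result
```

Comparing the openers first is a quick filter, since the opener is where the three structures differ most visibly. The recommendation slides still need to be read in full.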

Does this work for slides themselves, or just the narrative copy?

The prompts produce headline-and-bullet copy ready to drop into slide templates. The visual layout, charts, and design treatment still need to be done in PowerPoint or Keynote — generative AI image and chart output for executive presentations is not yet at a quality that survives a senior audience. The narrative copy is where the time saving sits; the visual layer remains a manual step.

For the matched workflow article, see ChatGPT and Copilot together — the two-tool stack that builds executive decks faster than either alone.

Mary Beth Hazeldine — Owner & Managing Director, Winning Presentations Ltd. With 24 years of corporate banking experience at JPMorgan Chase, PwC, Royal Bank of Scotland, and Commerzbank, she advises senior professionals across financial services, healthcare, technology, and government on integrating AI into executive presentation workflows.