If you are looking for a pitch deck template for startups that holds up in front of an investor — not a free PowerPoint download you have to redesign before sending — The Executive Slide System is a structured set of 26 templates, 93 AI prompts, and 16 scenario playbooks designed for senior decision-makers. Instant download, £39, single payment.

This page explains what the system contains, how the templates apply to a startup pitch, and what differs between a generic deck on Google and one built for investor scrutiny. If you are evaluating options before downloading, the detail below is written to help you decide.

Short on time? If you would rather skip the analysis and see the template system directly, view The Executive Slide System on Gumroad — instant download, single payment, designed for senior decision-makers including investors. The remainder of this page is for founders who want context first.

Why Free Startup Pitch Templates Fail in Investor Meetings

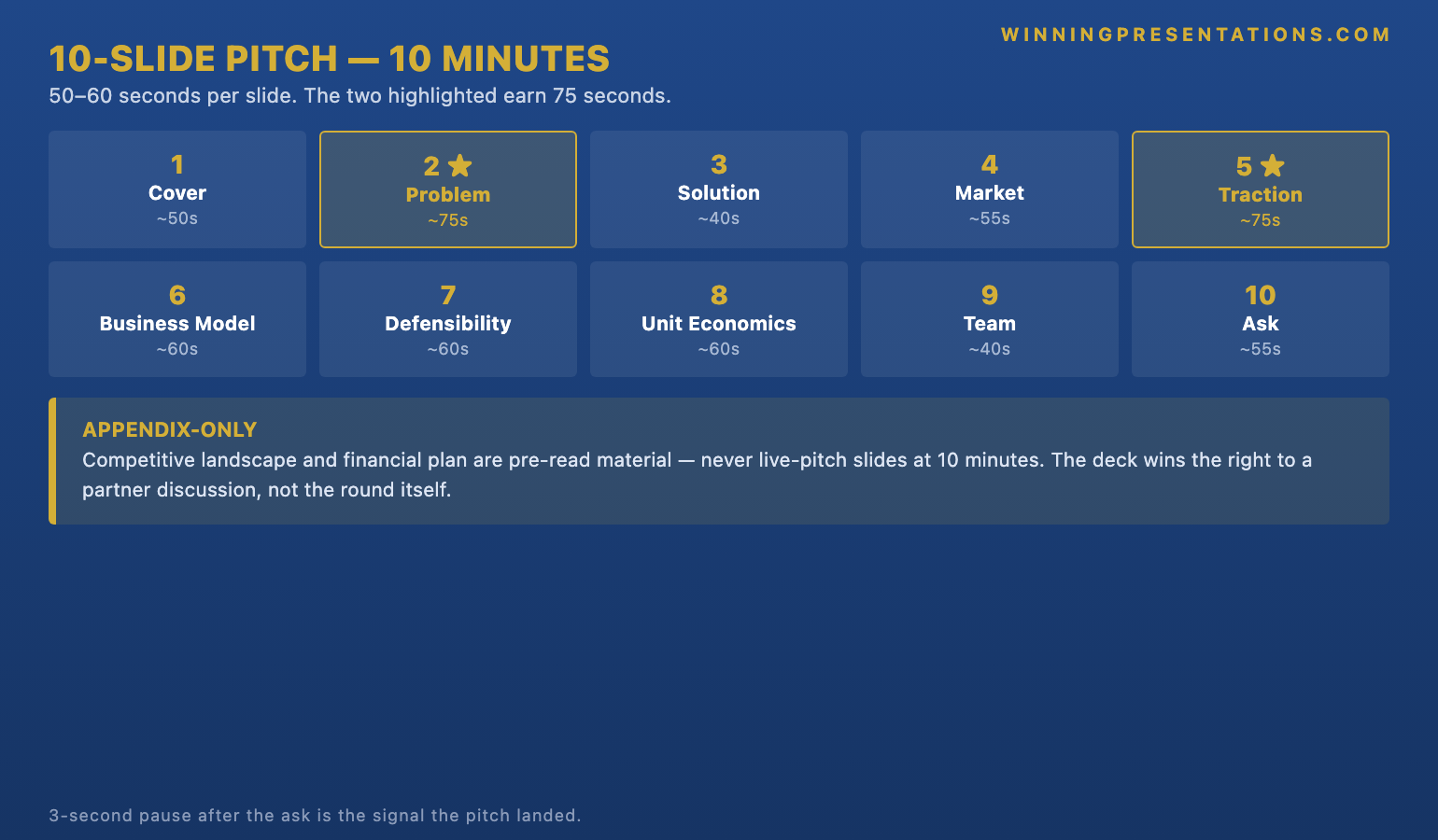

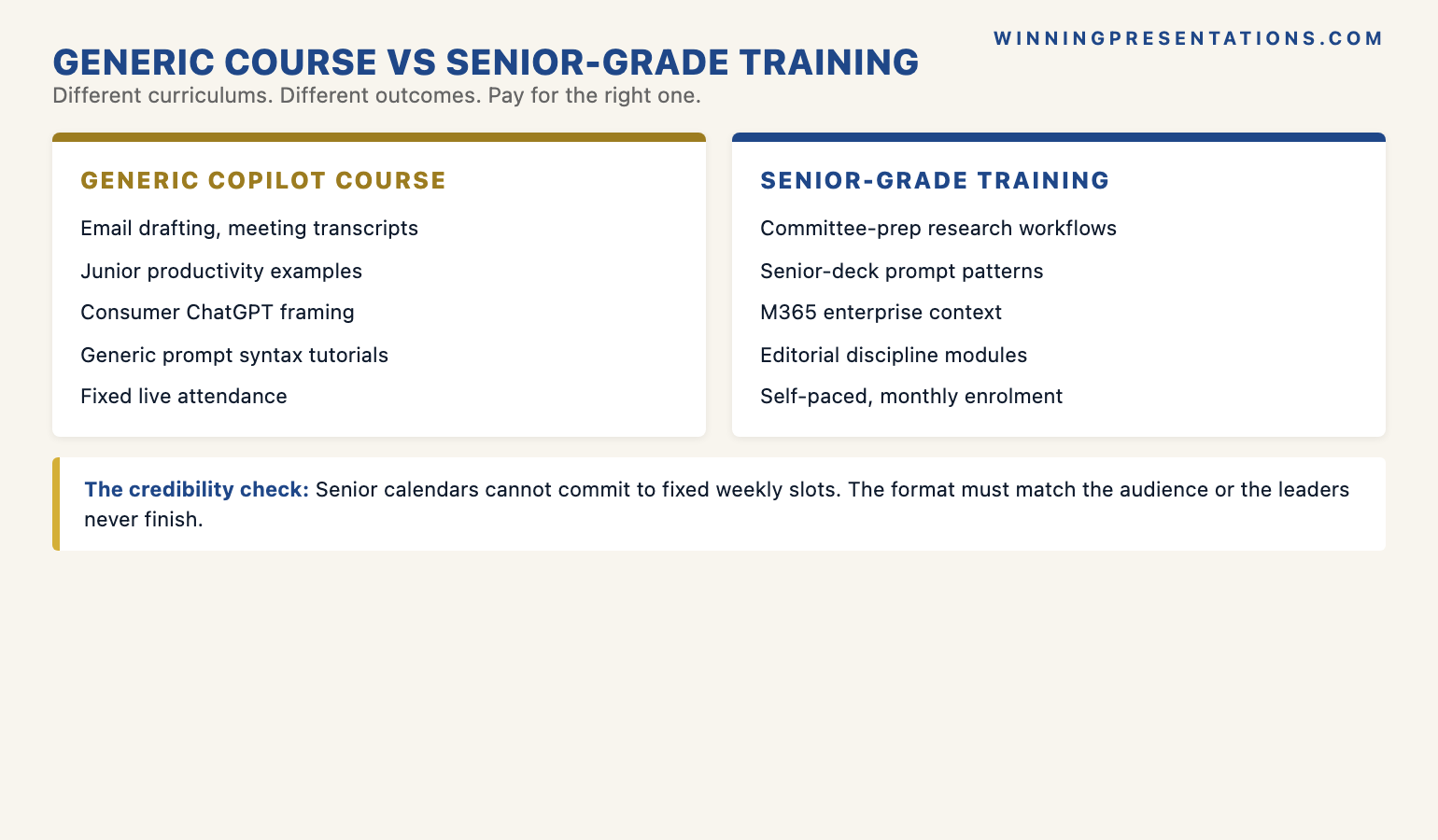

Most pitch deck templates available to download follow the same well-worn shape: title slide, problem, solution, market size, traction, team, ask. The structure is everywhere because Sequoia, Y Combinator, and Guy Kawasaki have all published versions of it. The problem is not that the structure is wrong — it is that everyone is using the same one, and investors have seen it a thousand times. By slide three, the partner across the table is half-listening, half-checking whether the numbers match their pattern.

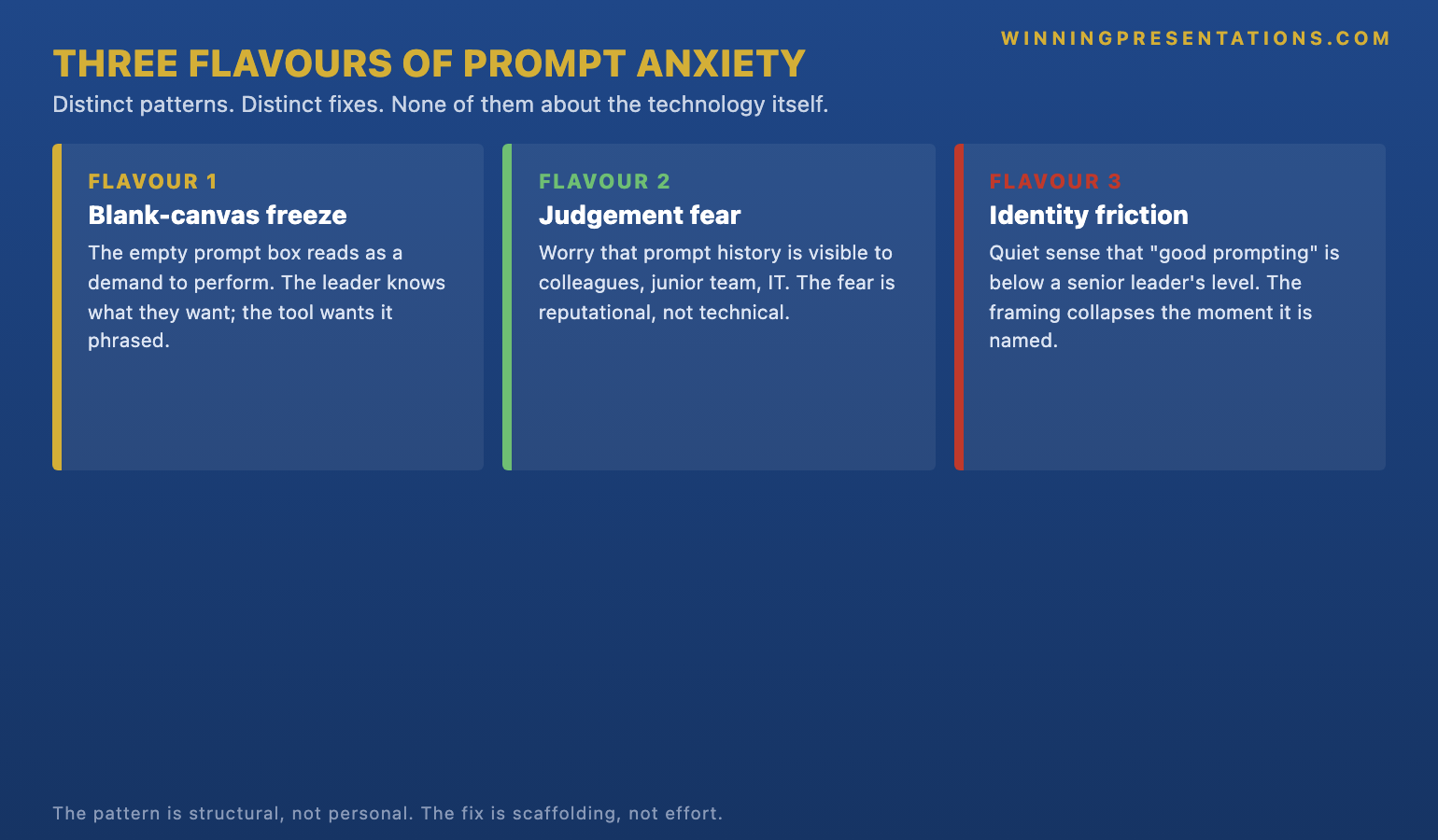

The deeper issue is that a generic template assumes a polished narrative will carry the room. It will not. Investors at seed and Series A are reading for one thing: can this team execute? The slide architecture either signals competence and clarity in the first thirty seconds, or it does not. A founder who opens with “the problem” instead of the recommendation buries the lede. A founder who shows a pretty traction chart without explicit conversion logic loses the partner who reads decks for a living. Free templates optimise for visual polish; investor decks need structural rigour.

A Slide System That Works for Investor Audiences

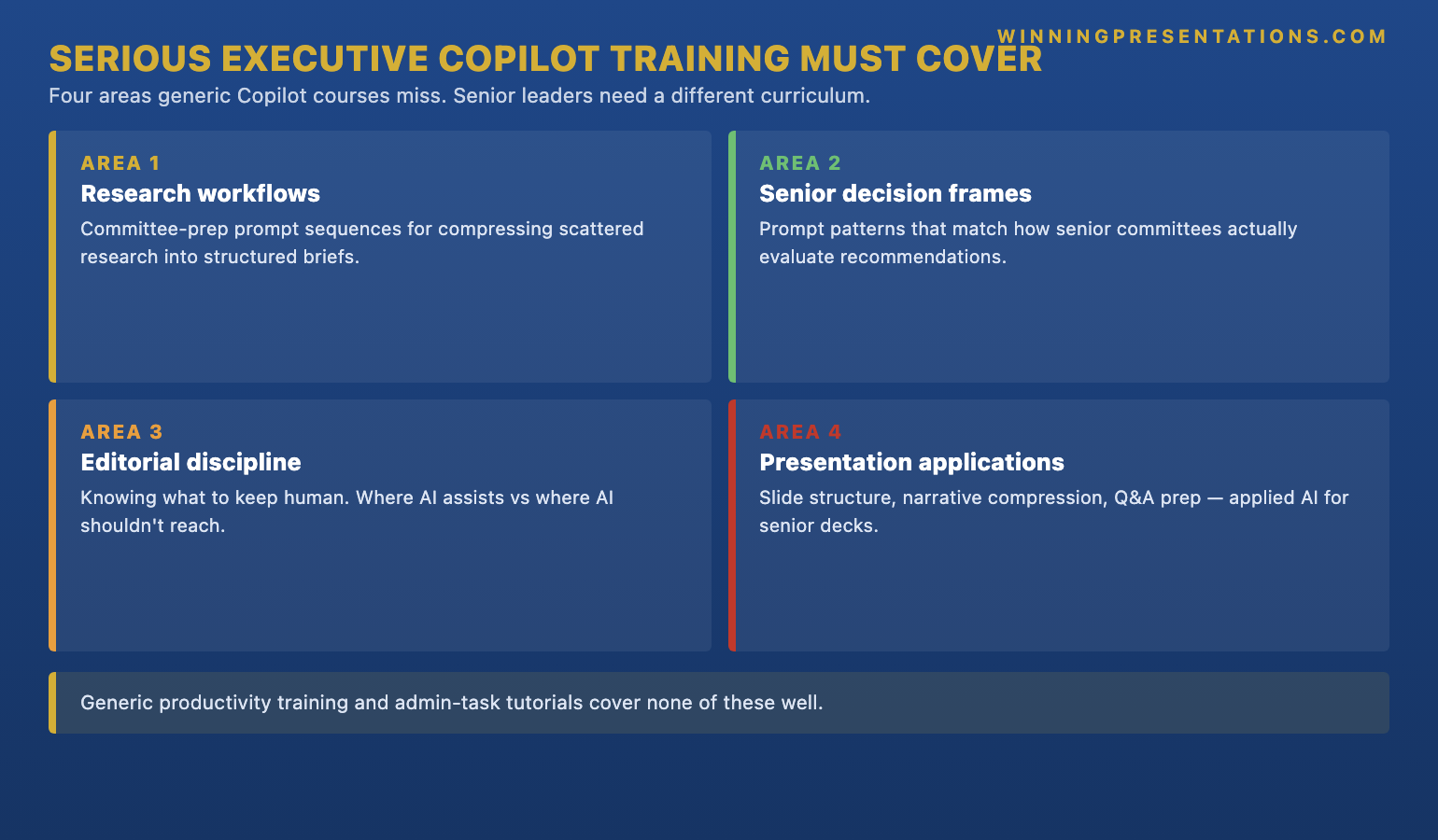

The Executive Slide System is structured around how senior decision-makers — including investors — take in information. The templates start with the recommendation and the ask, follow with the supporting evidence, and finish with implications and the decision frame. The 16 scenario playbooks adapt that core structure to specific situations, including investor pitches, capital requests, and board approvals — situations where structure decides whether the proposal lives or dies.

It was built by Mary Beth Hazeldine, who spent 24 years in corporate banking at JPMorgan Chase, PwC, Royal Bank of Scotland, and Commerzbank before taking over Winning Presentations in 2023. The slide structures draw on the presentations she designed and advised on for capital allocators, investment committees, and senior executives. The deliverables are practical: editable slide files, scenario-by-scenario walkthroughs, and AI prompts for ChatGPT and Microsoft Copilot to help you populate the templates with your real numbers and narrative quickly. The executive slide templates overview is a useful broader reference if you want to compare the system against a generic template kit.

What the Download Includes

- 26 slide templates — covering recommendation slides, market and evidence slides, traction summaries, financial frames, risk slides, and decision-asks designed for senior audiences

- 93 AI prompts — for ChatGPT and Microsoft Copilot, mapped to each template and scenario so you can populate slides with your real content fast

- 16 scenario playbooks — including investor pitches, capital requests, board approvals, and the other situations founders face when raising or reporting

- Master checklist — pre-meeting review of structure, evidence, and Q&A readiness before you walk into the partner meeting

- Framework reference — the underlying structure principle behind the templates, so you can adapt them when an investor conversation does not fit a playbook exactly

- Three-file delivery — instant download, no subscription, no recurring charge

Price: £39 — instant download, single payment.

Build a Pitch Deck That Earns the Second Meeting

The Executive Slide System gives you the senior-level structure, the scenario playbooks, and the AI prompts to turn your raise narrative into a deck investors will read past slide three — without rebuilding from a blank slide every time you adapt to a new fund.

- 26 templates and 16 scenario playbooks for investor pitches, capital requests, and senior reviews

- 93 AI prompts for ChatGPT and Microsoft Copilot, mapped to each template

- Master checklist and framework reference so the system adapts to each investor conversation

- £39, instant download, single payment, no subscription

Get The Executive Slide System → £39

Designed for senior professionals, including founders presenting to investment committees and partners

How Investor Decks Differ From Internal Pitch Decks

A founder pitching to a partner is in a different room than a founder pitching to their own board. Internal pitch decks can assume context: the audience already understands the product, the market, and the team. Investor decks have to establish all three in the first five slides without sounding like a sales pitch. The templates account for this. The recommendation slide names the round and the ask in one line. The market slide is sized to survive diligence, rather than built on the inflated numbers that get torn apart in the second meeting.

Investor partners read for patterns. They have seen hundreds of decks in the same shape, and they pattern-match against the ones that worked. A clean recommendation, a defensible market sizing, an honest traction frame, and a credible team slide signal a founder who has thought it through. Sloppy or inflated framing signals the opposite, and the meeting is lost before Q&A. The professional presentation course overview covers the underlying principle behind why structure carries more weight than design.

Stop rebuilding the deck for every fund you meet.

The Executive Slide System gives you the templates, scenario playbooks, and AI prompts to adapt your investor narrative for each fund without restarting from a blank slide. £39, instant download.

Is This the Right Template for You?

The Executive Slide System is designed for you if:

- You are a founder preparing for seed, Series A, or growth-round investor meetings, or other senior-level pitch situations

- You want a structured system that adapts across investor conversations, not a single deck or a stylised PowerPoint theme

- You face multiple senior audiences — investors one week, your own board the next, a strategic partner after that — and need templates that cover the range

- You use ChatGPT or Microsoft Copilot and want AI prompts mapped to specific slide tasks like drafting market frames or structuring traction logic

- You prefer a single-payment download to a subscription template tool

It is probably not the right fit if:

- You need pre-designed brand graphics and a stylised theme rather than a structural template kit

- You want a bespoke pitch deck design service or one-on-one coaching session

- Your primary need is delivery confidence or anxiety management rather than slide structure

- You are pitching to a consumer or social-media audience rather than senior decision-makers

If the fit looks right and you want context on how the templates work in a related senior scenario, the board presentation course overview walks through one of the playbook scenarios in more detail.

One payment, instant download, yours to keep.

No subscription, no recurring charge, no expiry. Download today, edit the templates for your raise, and use the same system across every investor conversation. The Executive Slide System — 26 templates, 93 AI prompts, 16 scenario playbooks. £39, single payment.

Frequently Asked Questions

Is the pitch deck template available as an instant download?

Yes. The Executive Slide System is delivered as an instant download from Gumroad for £39, single payment. Buyers receive three files that together contain the templates, AI prompts, scenario playbooks, master checklist, and framework reference. There is no subscription and no recurring charge. The same system serves founders raising in the UK, Europe, North America, and other markets.

Does the template cover seed, Series A, and growth-round pitches?

The templates are structural, not stage-specific. The recommendation, market, traction, financial, and decision-ask slides apply across raise stages; what changes between seed and growth is the substance and specificity of the supporting evidence. The 16 scenario playbooks include investor pitches, capital requests, and board approvals, so the system covers the conversations a founder has across the company lifecycle.

How are the AI prompts used with the pitch deck templates?

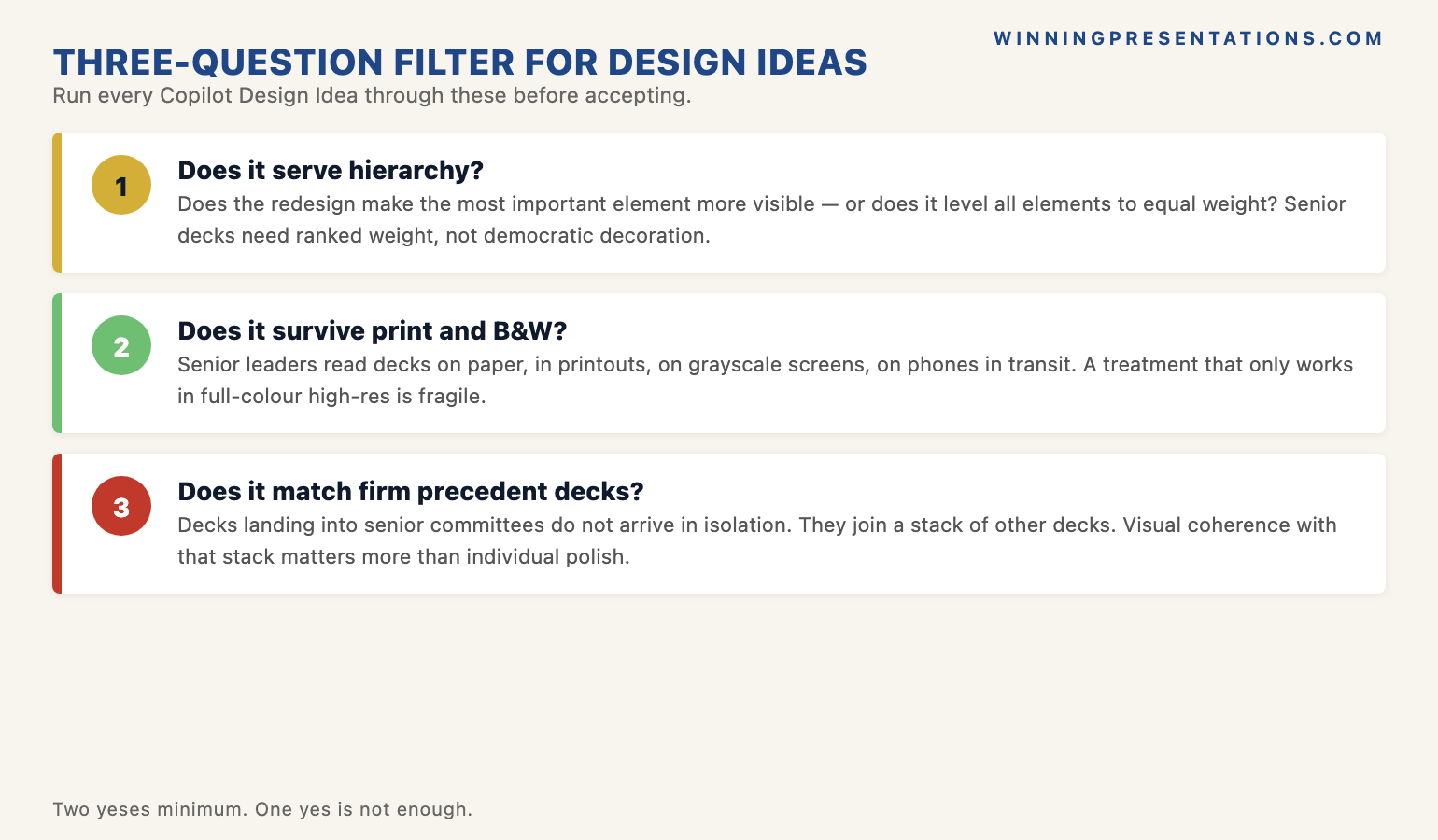

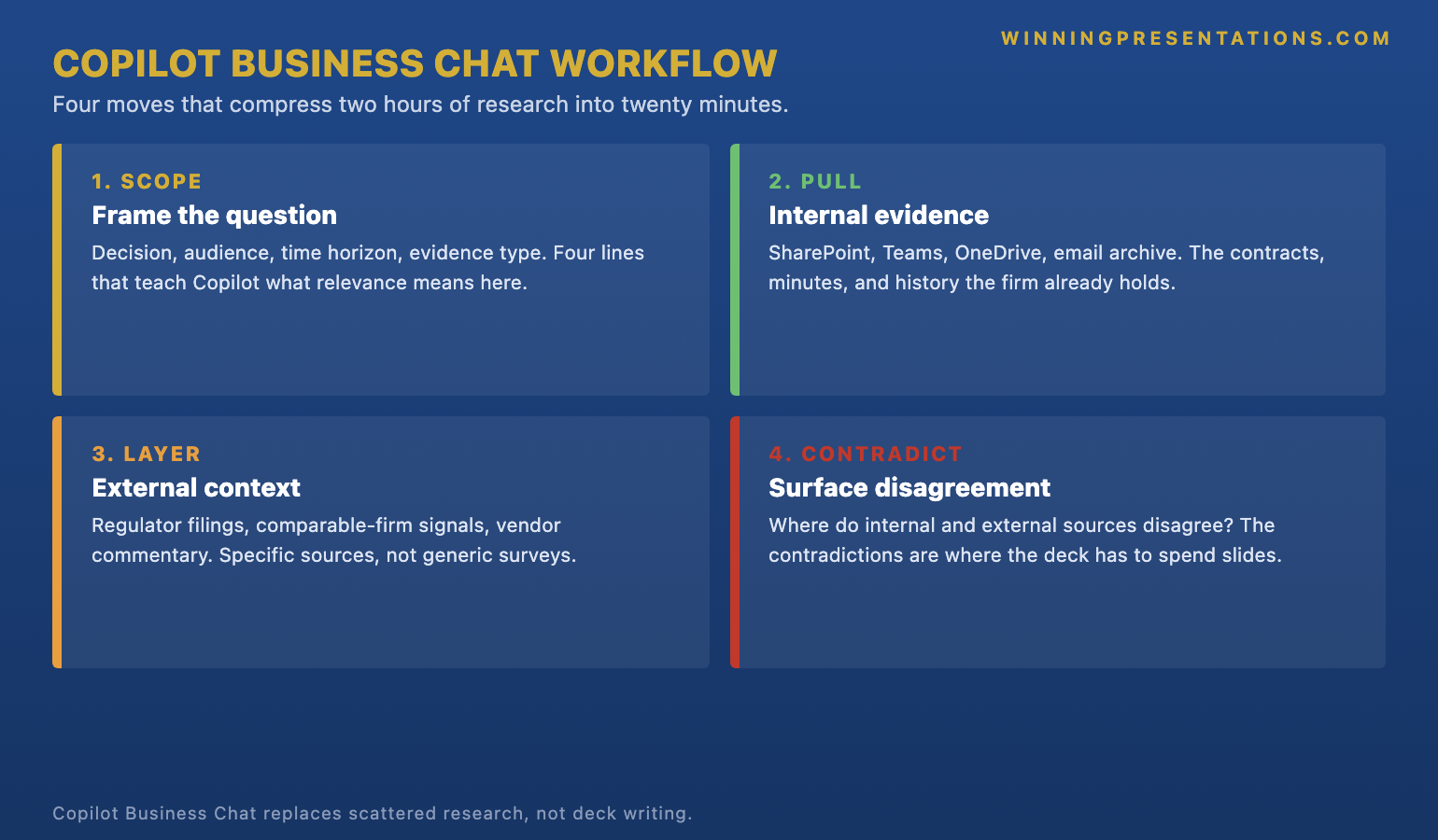

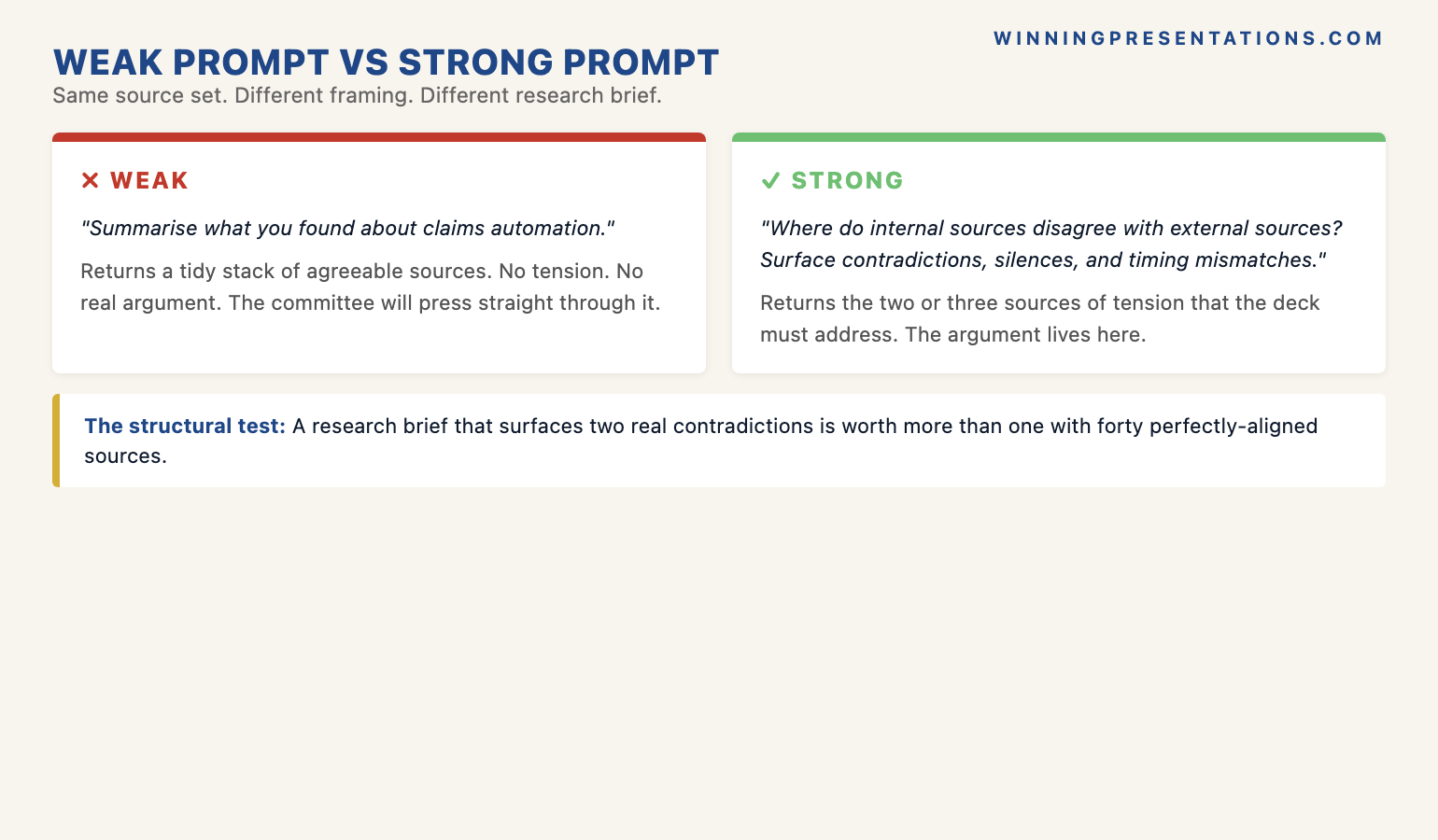

There are 93 AI prompts for ChatGPT and Microsoft Copilot, mapped to specific templates and scenarios. They cover tasks like drafting recommendation language, structuring traction evidence, sizing the market defensibly, generating Q&A banks for partner meetings, and stress-testing arguments. You paste the prompt into your AI tool, add the relevant context from your situation, and use the output to populate the template. The prompts assume no prior AI experience — instructions are step-by-step.

Will the templates work in PowerPoint, Keynote, and Google Slides?

The templates are delivered in editable formats designed to work with PowerPoint and equivalent slide software, including Keynote and Google Slides. The structure is what carries the system, not the visual styling. If your startup has a brand design you want to apply, the system gives you the slide architecture and you dress it in your own visual language.

Is this only for technology startups?

No. The structural principles apply equally to founders in technology, healthcare, financial services, deep tech, consumer, and B2B services. What matters is the audience: anyone presenting to investors, an executive committee, or a senior steering group benefits from the same architecture.

The Winning Edge — weekly newsletter for senior professionals

Short, practical essays on executive slides, investor and boardroom communication, and AI-assisted preparation. One email a week.

About the Author

Mary Beth Hazeldine is the Owner & Managing Director of Winning Presentations. With 24 years of corporate banking experience at JPMorgan Chase, PwC, Royal Bank of Scotland, and Commerzbank, she advises senior professionals across financial services, healthcare, technology, and government on structuring presentations for investor meetings, board approvals, executive committees, and capital requests.